Most engineering recognition is generic. "Great job on the migration" tells the recipient nothing about what was specifically valuable. Shout is a side project that uses LLM-based evaluations to measure -- and improve -- recognition quality.

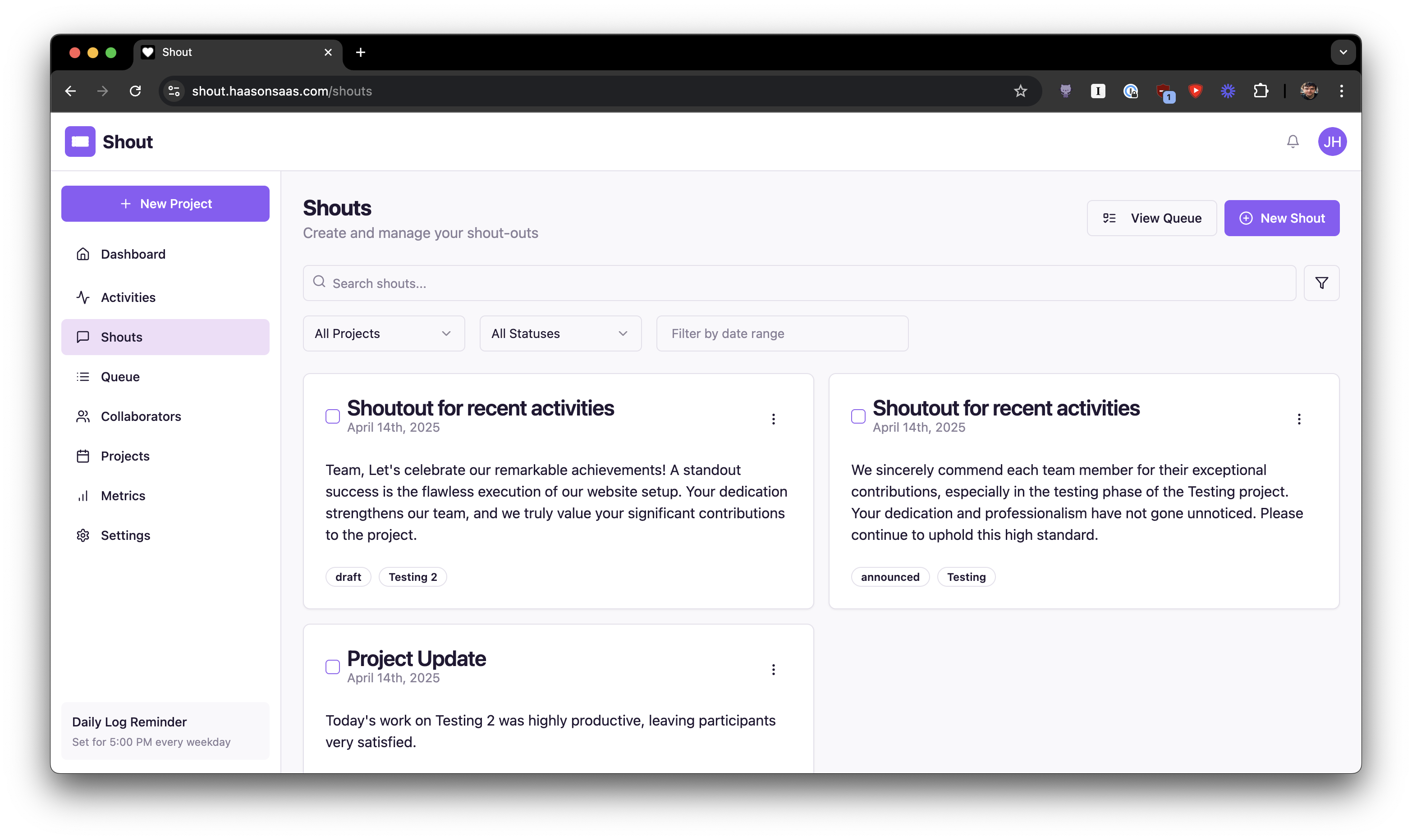

An early version of the Shout application

The Problem

Recognition matters for retention and morale. But most recognition messages fail on specificity: they praise the person without identifying what they did, why it was hard, or what impact it had. "Thanks for the great work" is noise. "Your auth refactoring cut DB load by 40% while maintaining sub-100ms response times during peak traffic" is signal.

The question was whether an LLM could reliably distinguish between the two.

The Evaluation Architecture

I adapted hallucination detection patterns from AI evaluation frameworks. Instead of checking whether an LLM output contains fabricated information, the evaluator checks whether a recognition message contains specific, verifiable details about the engineering work being recognized.

Three dimensions, each scored from 0 to 1, each with its own passing threshold (shown in parentheses):

- Specificity (0.7) -- Does the message reference concrete technical details?

- Accuracy (0.85) -- Are the technical claims correct? Highest bar because inaccurate recognition is worse than generic recognition.

- Impact connection (0.6) -- Does it connect the work to business or user outcomes? Most subjective, lowest threshold.

// Run the three evaluators concurrently.
const results = await Promise.all([
  evals.evaluateSpecificity(config, context),
  evals.evaluateAccuracy(config, context),
  evals.evaluateImpact(config, context),
]);

// Find the lowest-scoring dimension.
const weakestDimension = results.reduce(
  (prev, current) => (current.score < prev.score ? current : prev)
);

Multi-dimensional scoring was the critical design choice. A single quality score masked failure modes -- recognition could be specific and accurate but completely miss the "why it matters" dimension. Breaking it apart made feedback actionable.
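To make the "actionable" part concrete, here is a minimal sketch of how per-dimension thresholds might turn scores into feedback. It assumes each evaluator returns a dimension name, a score, and an explanation; the `DimensionResult` shape, the `THRESHOLDS` map, the `failingDimensions` helper, and the sample scores are all illustrative, not Shout's actual code or data.

```typescript
// Hypothetical result shape; the real evaluators may return something different.
type Dimension = "specificity" | "accuracy" | "impact";

interface DimensionResult {
  dimension: Dimension;
  score: number; // 0 to 1
  explanation: string;
}

// Thresholds as listed for the three dimensions.
const THRESHOLDS: Record<Dimension, number> = {
  specificity: 0.7,
  accuracy: 0.85,
  impact: 0.6,
};

// Return every dimension that scored below its own threshold,
// ordered so the largest shortfall comes first.
function failingDimensions(results: DimensionResult[]): DimensionResult[] {
  return results
    .filter((r) => r.score < THRESHOLDS[r.dimension])
    .sort(
      (a, b) =>
        (a.score - THRESHOLDS[a.dimension]) - (b.score - THRESHOLDS[b.dimension])
    );
}

// Made-up scores for a message that is specific and accurate but never says why it matters.
const sample: DimensionResult[] = [
  { dimension: "specificity", score: 0.9, explanation: "Names the refactoring and the DB load change." },
  { dimension: "accuracy", score: 0.9, explanation: "Claims match the provided context." },
  { dimension: "impact", score: 0.3, explanation: "Does not say why the change mattered." },
];

const failures = failingDimensions(sample);
```

A single averaged score for this sample would be 0.7 and look acceptable; checking each dimension against its own threshold surfaces the impact gap, along with the explanation to show the author.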

What I Learned About LLMs as Evaluators

Prompt specificity drives consistency. Early iterations produced wildly inconsistent scores because evaluation criteria were vague. Explicitly defining what each score means -- with examples at the boundaries -- reduced variance substantially.
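One way to pin down what each score means is to build the prompt from explicit anchors. The sketch below is an assumption about how that could look, not the prompts Shout uses: `RubricAnchor` and `buildRubricPrompt` are hypothetical names, and the scores assigned to the example messages are illustrative.

```typescript
// Hypothetical rubric structure: one anchor per score level, each with an example.
interface RubricAnchor {
  score: number;
  meaning: string;
  example: string;
}

// Assemble an evaluation prompt that spells out the scale before showing the message.
function buildRubricPrompt(
  dimension: string,
  anchors: RubricAnchor[],
  message: string
): string {
  const bands = [...anchors]
    .sort((a, b) => a.score - b.score)
    .map((a) => `- ${a.score.toFixed(1)}: ${a.meaning}\n  Example: "${a.example}"`)
    .join("\n");
  return [
    `Score the recognition message below for ${dimension} on a scale from 0 to 1.`,
    "Use these anchors:",
    bands,
    'Respond with JSON: {"score": <number>, "explanation": "<one or two sentences>"}',
    "Message:",
    message,
  ].join("\n");
}

const specificityPrompt = buildRubricPrompt(
  "specificity",
  [
    {
      score: 1.0,
      meaning: "Names the work and quantifies the result",
      example:
        "Your auth refactoring cut DB load by 40% while maintaining sub-100ms response times during peak traffic",
    },
    {
      score: 0.5,
      meaning: "Names the work but gives no detail",
      example: "Great job on the migration",
    },
    {
      score: 0.0,
      meaning: "Praises the person without naming any work",
      example: "Thanks for the great work",
    },
  ],
  "Thanks for jumping on the outage last night"
);
```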

Explanations are more valuable than scores. The natural-language explanations were the most useful output. They told me exactly what to improve: "The recognition mentions the migration but does not specify which systems were affected or quantify the performance improvement."
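If explanations are the main output, it helps to reject any evaluator reply that lacks one. A minimal sketch, assuming the model is asked to reply with a JSON object containing `score` and `explanation` (that reply format, the `Evaluation` type, and `parseEvaluation` are assumptions, and the 0.4 score is invented for the example):

```typescript
interface Evaluation {
  score: number; // 0 to 1
  explanation: string;
}

// Parse a raw model reply, rejecting anything without a usable score and explanation.
function parseEvaluation(raw: string): Evaluation {
  const parsed: unknown = JSON.parse(raw);
  if (typeof parsed !== "object" || parsed === null) {
    throw new Error("Evaluator reply is not a JSON object");
  }
  const { score, explanation } = parsed as Record<string, unknown>;
  if (typeof score !== "number" || score < 0 || score > 1) {
    throw new Error("Evaluator reply has no score between 0 and 1");
  }
  if (typeof explanation !== "string" || explanation.trim() === "") {
    throw new Error("Evaluator reply has no explanation");
  }
  return { score, explanation: explanation.trim() };
}

// The explanation text is the one quoted above; the score is made up.
const reply = parseEvaluation(
  '{"score": 0.4, "explanation": "The recognition mentions the migration but does not specify which systems were affected or quantify the performance improvement."}'
);
```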

Smaller models work for some dimensions. Toxicity detection ran fine on GPT-3.5-Turbo. Accuracy evaluation needed GPT-4. Matching model capability to task complexity cut cost without sacrificing quality where it mattered.
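The routing itself can be as simple as a lookup table. This is a sketch under assumptions: the `Task` names, `MODEL_BY_TASK`, and `modelFor` are hypothetical, and falling back to the larger model for tasks the text does not mention is my choice, not something measured here.

```typescript
type Task = "toxicity" | "specificity" | "accuracy" | "impact";

// Assumed default: use the more capable model unless a task is known to work on a smaller one.
const DEFAULT_MODEL = "gpt-4";

// Only the two pairings named in the text are filled in.
const MODEL_BY_TASK: Partial<Record<Task, string>> = {
  toxicity: "gpt-3.5-turbo", // ran fine on the smaller model
  accuracy: "gpt-4", // needed the larger model
};

// Pick the model for a task, falling back to the default when no pairing is recorded.
function modelFor(task: Task): string {
  return MODEL_BY_TASK[task] ?? DEFAULT_MODEL;
}
```

Keeping the mapping in one place makes it cheap to try a smaller model on a dimension and revert if scores degrade.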

LLMs are not deterministic. The same input can produce different scores across runs. I tracked evaluation consistency over time in Postgres and found occasional drift. Designing for this -- using thresholds with margin rather than exact cutoffs -- was essential.
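A minimal sketch of what a threshold with margin could look like, assuming a fixed band on either side of the cutoff. The 0.05 margin, the `verdict` and `scoreSpread` helpers, and the idea of a separate "borderline" outcome are illustrative assumptions, not the values or logic Shout uses.

```typescript
type Verdict = "pass" | "fail" | "borderline";

// Treat scores within `margin` of the threshold as borderline instead of a hard pass or fail.
function verdict(score: number, threshold: number, margin = 0.05): Verdict {
  if (score >= threshold + margin) return "pass";
  if (score <= threshold - margin) return "fail";
  return "borderline";
}

// Range of scores across repeated runs of the same input; a simple consistency signal to log.
function scoreSpread(scores: number[]): number {
  if (scores.length === 0) throw new Error("Need at least one score");
  return Math.max(...scores) - Math.min(...scores);
}
```

With an accuracy threshold of 0.85, a score of 0.86 lands in the borderline band, so a small wobble between runs does not flip the outcome from pass to fail.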

The Broader Pattern

The interesting finding was not about recognition specifically. It was that LLM-based evaluation systems work when you decompose a subjective quality judgment into specific, measurable dimensions with well-defined scoring rubrics. The same pattern applies to code review quality, documentation completeness, or any domain where "good" is hard to define but easy to recognize in examples.